13 Dec Governance and practical structures to implement ethical AI principles

This lesson serves as the capstone of our AI Ethics course, bridging key ethical concepts with practical action. We will discuss the following:

- Participatory Design & Inclusive Futures

- Cultivating an Ethical Mindset

- Final Project: Developing an AI Ethics Charter

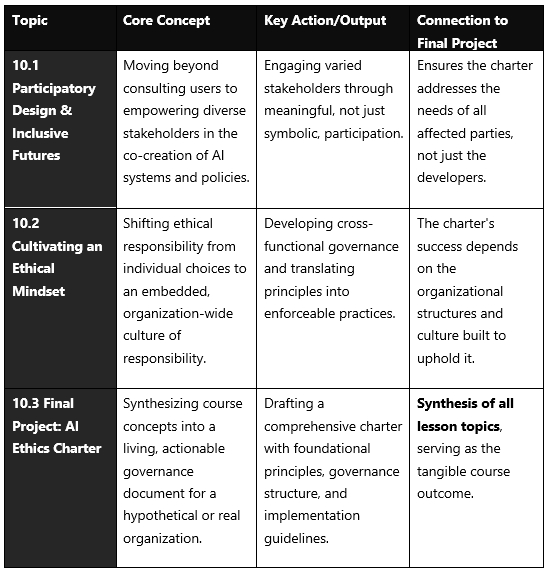

The table below summarizes the core themes you will explore, culminating in a final project that applies this knowledge to create or analyze a real-world AI Ethics Charter.

Participatory Design & Inclusive Futures

This topic emphasizes that ethical AI requires involving people who will be affected by the technology in its design and governance process.

- Beyond Consultation to Empowerment: Current practice is often merely consultative: stakeholders are asked for discrete feedback on predefined choices (such as user interfaces) rather than empowered to shape fundamental decisions about data, models, or whether AI should be used at all. True participatory design aims for deeper collaboration or ownership.

- A Framework for Meaningful Participation: To move beyond tokenism, consider these four dimensions when designing participation:

  - Why? (Motivation): Is the goal to create a technically better system (instrumental), or is it a moral imperative to include affected groups (normative)?

  - What? (Scope): What aspects are stakeholders allowed to influence? Is it just the interface, or the core model, data, and purpose?

  - Who? (Stakeholders): Are participants selected by the project team, or is the community itself involved in deciding who represents them? Including marginalized and vulnerable groups is critical for inclusive futures.

  - How? (Method): Do methods include co-creation workshops, deliberative forums, or community-led design? Creating “Safe Spaces” for equitable interaction is vital.

- The “Proxy” Trap: Under pressure, teams may use proxies (like stand-ins for users, UX experts as mediators, or algorithms trained on past preferences) to represent stakeholders. These shortcuts risk misrepresenting true needs and values.

Cultivating an Ethical Mindset

This topic focuses on moving from abstract principles to an ingrained organizational culture where ethics is a default practice.

- From Individual to Organizational Ethics: An ethical mindset must be scaled from individual practitioners to the entire organization. This requires clear accountability (identifying who is responsible for AI outcomes) and governance structures with the authority to enforce policies.

- Operationalizing Principles: Core principles like fairness, transparency, and accountability must be translated into concrete actions. For example:

  - Fairness: Conducting bias audits using diverse metrics, not just aggregate accuracy.

  - Transparency: Investing in Explainable AI (XAI) techniques and documenting data sources and model limitations.

  - Accountability: Creating an organizational chart that assigns clear responsibility for each AI system’s development, deployment, and monitoring.

- Leadership and Culture: Ethical AI is a leadership responsibility. Leaders must bridge high-level values (like justice and respect) with technical requirements (like fairness and safety). Building this culture requires cross-functional collaboration, continuous training, and incentivizing ethical behavior alongside technical performance.
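To make the fairness point above concrete, here is a minimal sketch of a bias audit that reports metrics per group instead of a single aggregate accuracy. The function names (`audit_by_group`, `max_gap`), the choice of metrics, and the toy data are assumptions made for illustration; a real audit would pick metrics and groups together with the affected stakeholders.

```python
from collections import defaultdict


def audit_by_group(y_true, y_pred, groups):
    """Return accuracy, selection rate, and true positive rate for each group."""
    counts = defaultdict(lambda: {"n": 0, "correct": 0, "pred_pos": 0, "pos": 0, "true_pos": 0})
    for yt, yp, g in zip(y_true, y_pred, groups):
        c = counts[g]
        c["n"] += 1
        c["correct"] += int(yt == yp)
        c["pred_pos"] += int(yp == 1)
        c["pos"] += int(yt == 1)
        c["true_pos"] += int(yt == 1 and yp == 1)

    report = {}
    for g, c in counts.items():
        report[g] = {
            "accuracy": c["correct"] / c["n"],
            # Share of the group that received the positive outcome.
            "selection_rate": c["pred_pos"] / c["n"],
            # Share of truly positive cases the model caught (None if there are none).
            "tpr": c["true_pos"] / c["pos"] if c["pos"] else None,
        }
    return report


def max_gap(report, metric):
    """Largest difference in a metric between any two groups."""
    values = [r[metric] for r in report.values() if r[metric] is not None]
    return max(values) - min(values)


# Toy data (hypothetical): the model is perfect for group A and rejects everyone in group B.
y_true = [1, 1, 0, 0, 1, 1, 0, 0]
y_pred = [1, 1, 0, 0, 0, 0, 0, 0]
groups = ["A", "A", "A", "A", "B", "B", "B", "B"]

overall_accuracy = sum(int(t == p) for t, p in zip(y_true, y_pred)) / len(y_true)
report = audit_by_group(y_true, y_pred, groups)

print("overall accuracy:", overall_accuracy)
for metric in ("accuracy", "selection_rate", "tpr"):
    print(metric, "gap between groups:", max_gap(report, metric))
```

In this toy data the aggregate accuracy looks acceptable, yet every qualified person in group B is rejected. That is the kind of gap a single aggregate number hides.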

Final Project: Developing an AI Ethics Charter

This project is your opportunity to synthesize everything you’ve learned into a single, actionable document. An AI Ethics Charter is a formal declaration of an organization’s commitment to responsible AI, outlining its principles, governance, and implementation plans.

Your charter should include the following key sections:

- Preamble & Purpose: State the organization’s mission and the charter’s objective to ensure AI serves people, society, and the planet.

- Core Ethical Principles: Declare 5-7 foundational principles. Commonly adopted principles include:

  - Human Oversight & Control: AI should augment, not replace, human judgment.

  - Fairness & Inclusiveness: Actively work to reduce discriminatory bias and promote equitable access.

  - Transparency & Explainability: Commit to clarity about AI capabilities, limitations, and decision-making processes.

  - Privacy, Security & Robustness: Protect data and ensure systems are secure, reliable, and resilient.

  - Accountability: Establish clear lines of responsibility for AI outcomes.

  - Societal & Environmental Well-being: Consider broader impacts, including sustainability.

- Governance Structure: Describe how the charter will be enforced.

  - AI Governance Committee: A cross-functional team (legal, ethics, technical, business) to oversee strategy and review high-risk projects.

  - Clear Roles & Responsibilities: Define who is accountable for auditing, risk management, and incident response.

  - Risk Management Process: Integrate a framework like the NIST AI RMF (Govern, Map, Measure, Manage) to identify and mitigate risks throughout the AI lifecycle.

- Implementation & Lifecycle Practices: Outline specific procedures to enact principles.

  - Participatory Design Protocols: How will diverse stakeholders be involved in the design process for new AI systems?

  - Ethical Impact Assessments: Mandatory assessments before development, during deployment, and periodically thereafter.

  - Training & Awareness: Programs to educate all employees, especially developers and leaders, on the charter and ethical AI practices.

  - Auditing & Transparency Reports: Plans for regular internal/external audits and public reporting on AI system performance and compliance.
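If you want to keep your draft in a machine-checkable form, the charter sections listed above can be sketched as a small data structure with a completeness check. This is only an illustrative sketch: the `Charter` class, the `review_charter` function, and the example names are hypothetical and not part of any standard or library.

```python
from dataclasses import dataclass, field
from typing import Dict, List

# Duties the governance section should assign to a named owner (taken from the list above).
REQUIRED_DUTIES = ("auditing", "risk management", "incident response")


@dataclass
class Charter:
    preamble: str = ""
    principles: List[str] = field(default_factory=list)
    governance: Dict[str, str] = field(default_factory=dict)  # duty -> accountable owner
    implementation: List[str] = field(default_factory=list)   # lifecycle practices


def review_charter(charter: Charter) -> List[str]:
    """Return a list of gaps found in a draft charter (an empty list means none found)."""
    problems = []
    if not charter.preamble.strip():
        problems.append("missing preamble")
    if not 5 <= len(charter.principles) <= 7:
        problems.append(f"expected 5-7 principles, found {len(charter.principles)}")
    for duty in REQUIRED_DUTIES:
        if not charter.governance.get(duty):
            problems.append(f"no owner for {duty}")
    if not charter.implementation:
        problems.append("no implementation practices listed")
    return problems


# A deliberately incomplete draft (hypothetical content).
draft = Charter(
    preamble="Ensure our AI systems serve people, society, and the planet.",
    principles=["Human Oversight & Control", "Fairness & Inclusiveness", "Accountability"],
    governance={"auditing": "AI Governance Committee", "risk management": "Risk Lead"},
    implementation=["Ethical Impact Assessments"],
)

for problem in review_charter(draft):
    print("-", problem)
```

A check like this only confirms that each section is present. Whether the principles and practices are meaningful still needs human review by the governance committee.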
