13 Dec Governance, Policy, and Building Ethical AI

In this lesson, we will explore the critical governance and practical structures needed to implement ethical AI principles. The framework moves from broad policy strategies to specific tools for practitioners, then examines organizational accountability and the global ecosystem required for responsible AI development.

We will discuss the following:

- The Regulatory Landscape

- Tools for Practitioners

- Organizational Structures

- The Role of Open Source, Standards, and International Cooperation

The Regulatory Landscape

Two contrasting philosophies guide global AI policy: the pro-innovation approach, which aims to foster growth with minimal new legislation, and the precautionary principle, which prioritizes safety by regulating based on potential risks. This tension shapes the regulations you see worldwide.

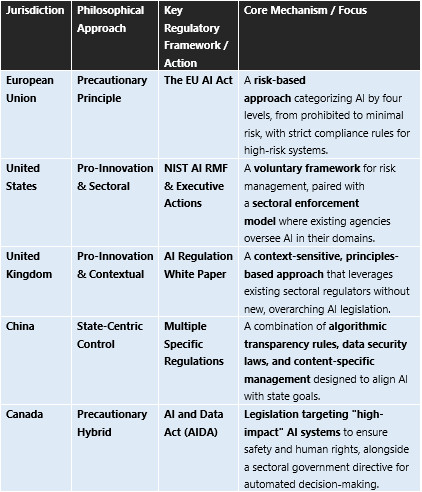

Let us see the regulations of AI worldwide:

The table shows that the EU AI Act is the most comprehensive example of the precautionary principle, while the voluntary NIST AI Risk Management Framework is the cornerstone of the U.S. pro-innovation strategy. Other nations adapt these two models to their own priorities.

Tools for Practitioners

To turn ethical principles into practice, developers and organizations use specific operational tools.

- AI Auditing & Checklists: Systematic reviews verify that AI systems are compliant and fair, and that they function as intended. An effective audit reviews training data quality, model fairness, decision explainability, security protocols, and governance documentation.

- Red Teaming: This is a proactive security exercise where a “red team” simulates real-world attacks to find vulnerabilities in an AI system. A structured checklist for red teaming includes stages for planning (scope/rules), execution (simulated attacks), and reporting (vulnerabilities and fixes).

- Impact Assessments: Conducted before deploying high-risk AI, these assessments analyze potential impacts on rights, safety, and society. The EU AI Act requires a fundamental rights impact assessment from certain deployers of high-risk AI, such as public bodies using it in law enforcement.
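The five audit areas listed in the first bullet can be tracked as a simple checklist. The following is a minimal sketch in Python, not an audit standard: the names `AUDIT_AREAS`, `AuditItem`, and `audit_summary` are hypothetical, and the sample findings are made up for illustration.

```python
from dataclasses import dataclass

# The five review areas named in the auditing bullet above.
AUDIT_AREAS = (
    "training data quality",
    "model fairness",
    "decision explainability",
    "security protocols",
    "governance documentation",
)


@dataclass
class AuditItem:
    """One reviewed area and its outcome (hypothetical structure)."""
    area: str
    passed: bool
    notes: str = ""


def audit_summary(items):
    """Return (complete, open_areas).

    An area stays open if it was never reviewed or if its review failed.
    The audit is complete only when no area is open.
    """
    reviewed = {item.area: item.passed for item in items}
    open_areas = [area for area in AUDIT_AREAS if not reviewed.get(area, False)]
    return len(open_areas) == 0, open_areas


# Illustrative findings: one failed area, one area not yet reviewed.
items = [
    AuditItem("training data quality", True),
    AuditItem("model fairness", False, "error rates differ between groups"),
    AuditItem("decision explainability", True),
    AuditItem("security protocols", True),
]
complete, open_areas = audit_summary(items)
print(complete, open_areas)
```

Treating an unreviewed area the same as a failed one is a deliberate choice in this sketch: a missing review should block sign-off rather than pass silently.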

Organizational Structures

Embedding ethics requires more than tools; it demands formal organizational accountability.

- Ethics Boards & Chief Ethics Officers: A dedicated governance body or executive is crucial. They create and enforce guidelines, resolve ethical dilemmas, and ensure day-to-day operations align with stated principles. This structure “has to have teeth,” meaning real authority to enact consequences.

- Whistleblower Protections: Effective governance requires safe channels for employees to report unethical AI practices internally. The EU AI Act extends EU whistleblower protection rules to people who report infringements of the Act, which supports secure reporting.

- Clear Accountability Frameworks: Organizations must predefine “a throat to squeeze”, that is, a clear line of responsibility for AI outcomes. This means assigning ownership of each AI model and decision to specific individuals or teams.
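Assigning ownership for each model can be as simple as keeping a registry and checking it before deployment. The sketch below assumes a hypothetical in-memory registry; the model IDs, team names, and the functions `owner_of` and `unowned` are invented for illustration.

```python
# Hypothetical registry: every deployed model maps to a named owner.
MODEL_OWNERS = {
    "credit-scoring-v2": "risk-analytics-team",
    "resume-screener-v1": "hr-platform-team",
}


def owner_of(model_id, registry=MODEL_OWNERS):
    """Return the accountable owner, or raise if none was assigned."""
    owner = registry.get(model_id)
    if not owner:
        raise LookupError(f"No accountable owner assigned for model '{model_id}'")
    return owner


def unowned(deployed_models, registry=MODEL_OWNERS):
    """List deployed models that lack an assigned owner."""
    return [model for model in deployed_models if not registry.get(model)]


# A deployment check might refuse to ship anything this returns.
print(unowned(["credit-scoring-v2", "chatbot-v3"]))
```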

The Role of Open Source, Standards, and International Cooperation

Building ethical AI globally depends on collaboration, not just isolated compliance.

- Open Source’s Dual Role: Open-source software is a key enabler of “Sovereign AI”, meaning a nation’s or organization’s ability to develop controlled, independent AI capabilities. It provides transparency, security, and flexibility for customization. A 2025 survey found that 90% of organizations view open source as essential to achieving this sovereignty.

- Standards for Interoperability: Shared technical standards (like those developed by NIST or international bodies) are essential. They help ensure AI systems are robust, evaluable, and can work together safely across borders, reducing market fragmentation.

- International Cooperation: Despite different regulatory approaches, 94% of organizations believe global collaboration on open-source AI is essential. Cooperation can focus on shared research, responsible AI principles, and common evaluation metrics, helping to address universal challenges like bias and safety while navigating geopolitical tensions.

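To grasp how these diverse elements interact in practice, consider the EU AI Act’s risk pyramid, which sorts AI systems into four tiers: unacceptable risk (prohibited), high risk (subject to strict requirements), limited risk (subject to transparency obligations), and minimal risk (no new obligations). Governance requirements scale with the tier.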
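The AI Act’s risk pyramid is commonly described as four tiers, which can be sketched as a lookup from tier to obligation. This is a simplified illustration, not a legal classification: the example use cases in `EXAMPLE_USES` are commonly cited ones chosen for this sketch, and the names `RiskTier` and `obligation_for` are hypothetical.

```python
from enum import Enum


class RiskTier(Enum):
    """Four tiers of the risk pyramid, with a one-line summary of each."""
    UNACCEPTABLE = "prohibited"
    HIGH = "strict requirements before and after deployment"
    LIMITED = "transparency obligations"
    MINIMAL = "no additional obligations"


# Illustrative mapping only; real classification depends on the legal text
# and the specific context of use.
EXAMPLE_USES = {
    "social scoring by public authorities": RiskTier.UNACCEPTABLE,
    "law enforcement risk assessment": RiskTier.HIGH,
    "customer service chatbot": RiskTier.LIMITED,
    "spam filter": RiskTier.MINIMAL,
}


def obligation_for(use_case):
    """Summarize the tier and obligation for a known example use case."""
    tier = EXAMPLE_USES.get(use_case)
    if tier is None:
        return "unclassified: needs legal review"
    return f"{tier.name.lower()} risk: {tier.value}"


for use_case in EXAMPLE_USES:
    print(f"{use_case} -> {obligation_for(use_case)}")
```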

Let us look at the key takeaways and a case study. To connect these concepts, consider the following central tension and example:

- Flexibility vs. Certainty: The U.S. sectoral and U.K. contextual models offer flexibility for innovators, while the EU’s detailed rules provide legal certainty for businesses operating in its market.

- Case Study: COMPAS Algorithm: This tool, used in the U.S. justice system to predict recidivism, sparked debate about fairness. Its developers argued it was fair because its risk scores were equally accurate across racial groups, while investigators at ProPublica found that its errors differed by group: Black defendants who did not reoffend were more often labelled high risk, and white defendants who did reoffend were more often labelled low risk. This highlights how a technical definition of fairness can conflict with societal equity.
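The COMPAS disagreement can be reproduced with simple arithmetic. The sketch below uses made-up confusion-matrix counts (not the real COMPAS data) for two hypothetical groups, and assumes that "equally accurate" is read as equal precision, i.e. the share of high-risk labels that turn out to be correct. Under that assumption, both groups look the same on precision while their error rates differ sharply.

```python
def rates(tp, fp, fn, tn):
    """Compute three fairness-relevant rates from confusion-matrix counts.

    tp: labelled high risk and reoffended
    fp: labelled high risk but did not reoffend
    fn: labelled low risk but reoffended
    tn: labelled low risk and did not reoffend
    """
    return {
        "precision": tp / (tp + fp),
        "false_positive_rate": fp / (fp + tn),
        "false_negative_rate": fn / (fn + tp),
    }


# Illustrative counts only; chosen so that precision matches across groups.
group_a = rates(tp=60, fp=40, fn=20, tn=80)
group_b = rates(tp=30, fp=20, fn=30, tn=120)

for name, result in (("group A", group_a), ("group B", group_b)):
    print(name, {metric: round(value, 3) for metric, value in result.items()})
```

With these counts, a high-risk label is right 60% of the time in both groups, yet people in group A who do not reoffend are wrongly flagged more than twice as often as those in group B, while group B has the higher rate of missed reoffenders. Which of these rates should be equalized is a policy question, not something the arithmetic can settle.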
