Case Study: Amazon’s 2025 AI Ethics Letter from Employees

13 Dec

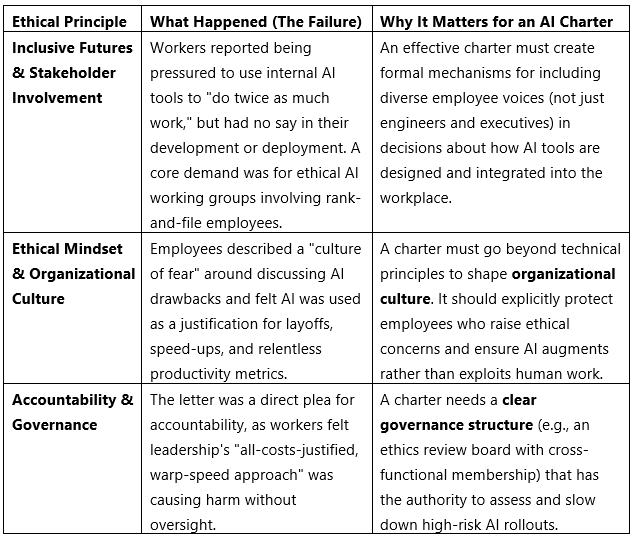

In late 2025, more than 1,000 Amazon employees published an open letter raising alarms about the company’s aggressive AI deployment. The case is a current, real-world example of a Participatory Design failure: the people affected by the technology had no meaningful input into how it was rolled out.

This case shows that ethical failures are not only about biased algorithms. They also arise from implementation processes that ignore worker welfare and exclude workers from decisions about the tools they must use.

How to Connect This to Your Final Project

This case provides concrete, current justifications for your proposals:

- Justify Participatory Design: Cite the Amazon case to argue that your charter must include worker councils or stakeholder panels for any AI system that changes workflows.

- Mandate Transparency: Refer to the case to require that AI decision processes be documented and explainable to affected individuals, not just to the technical team.

This case shows that AI ethics is not a solved problem but an urgent, ongoing challenge.

If you liked this tutorial, spread the word and share the link to it and to our website, Studyopedia, with others.


